Echoing Exciting Experiences

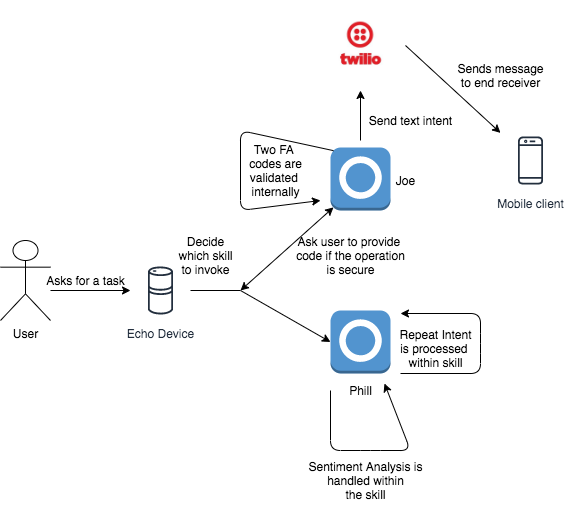

A few years ago I began experimenting with building skills on the Amazon Alexa platform. I found the developer experience to be top-notch and the SDKs easy to use. I created two skills named Phill and Joe. From my understanding, developing a skill on the Alexa platform consists of three basic components.

Intents:

Intents are the VUI (voice user interface) equivalent of a software interface. They list the features and functionality you wish to offer through your Alexa skill.

These are the intents I assigned to the skill Joe: perform sentiment analysis, say a greeting, send a text message, validate a two-factor authentication code, and perform a secure action.

Utterances:

Utterances are like the implementations of the above intents: the sample phrases a user might actually say to trigger each one. They are essentially the product of applying context to intents to make them easier to understand and implement. Think of them as test cases for human interaction with our skill.

Utterances basically train Alexa to understand which intent to pick when it receives a particular type of input.
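To make the relationship between intents and utterances concrete, here is a rough sketch of what an interaction model for a skill like Joe could look like, written as a JavaScript object that mirrors the JSON structure used in the Alexa developer console. The invocation name, intent names, slot names, and sample phrases are all my assumptions for illustration and not the ones from the original skill.

```javascript
// Hypothetical interaction model: every name and phrase below is illustrative.
const model = {
  interactionModel: {
    languageModel: {
      invocationName: 'my assistant joe', // assumed invocation name
      intents: [
        {
          name: 'SentimentIntent',
          slots: [{ name: 'phrase', type: 'AMAZON.SearchQuery' }],
          samples: ['analyze {phrase}', 'what is the sentiment of {phrase}'],
        },
        { name: 'GreetingIntent', slots: [], samples: ['say hello', 'greet me'] },
        {
          name: 'SendTextIntent',
          slots: [{ name: 'message', type: 'AMAZON.SearchQuery' }],
          samples: ['send a text saying {message}', 'text {message}'],
        },
        {
          name: 'ValidateCodeIntent',
          slots: [{ name: 'code', type: 'AMAZON.NUMBER' }],
          samples: ['my code is {code}', 'verify {code}'],
        },
        {
          name: 'SecureActionIntent',
          slots: [],
          samples: ['perform the secure action', 'run the secure action'],
        },
      ],
    },
  },
};

// List every sample utterance alongside the intent it is meant to trigger.
function listSamples(m) {
  return m.interactionModel.languageModel.intents.flatMap((intent) =>
    intent.samples.map((sample) => ({ intent: intent.name, sample }))
  );
}

console.log(listSamples(model));
```

Note that `listSamples` only prints the training phrases. Alexa generalizes from these samples when it resolves real speech, so users do not have to match them word for word.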

The Actual Skill itself:

Alexa skills support a variety of execution backends. For ease of integration and convenience I chose AWS Lambda. Attached below is the JavaScript code for the skill Joe.

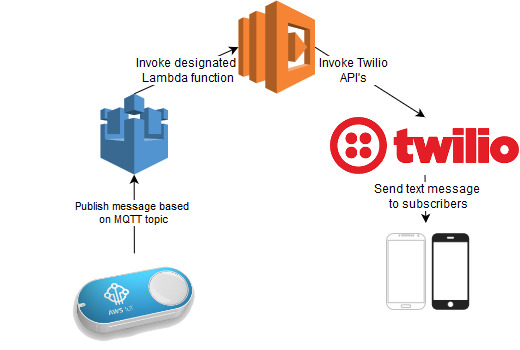

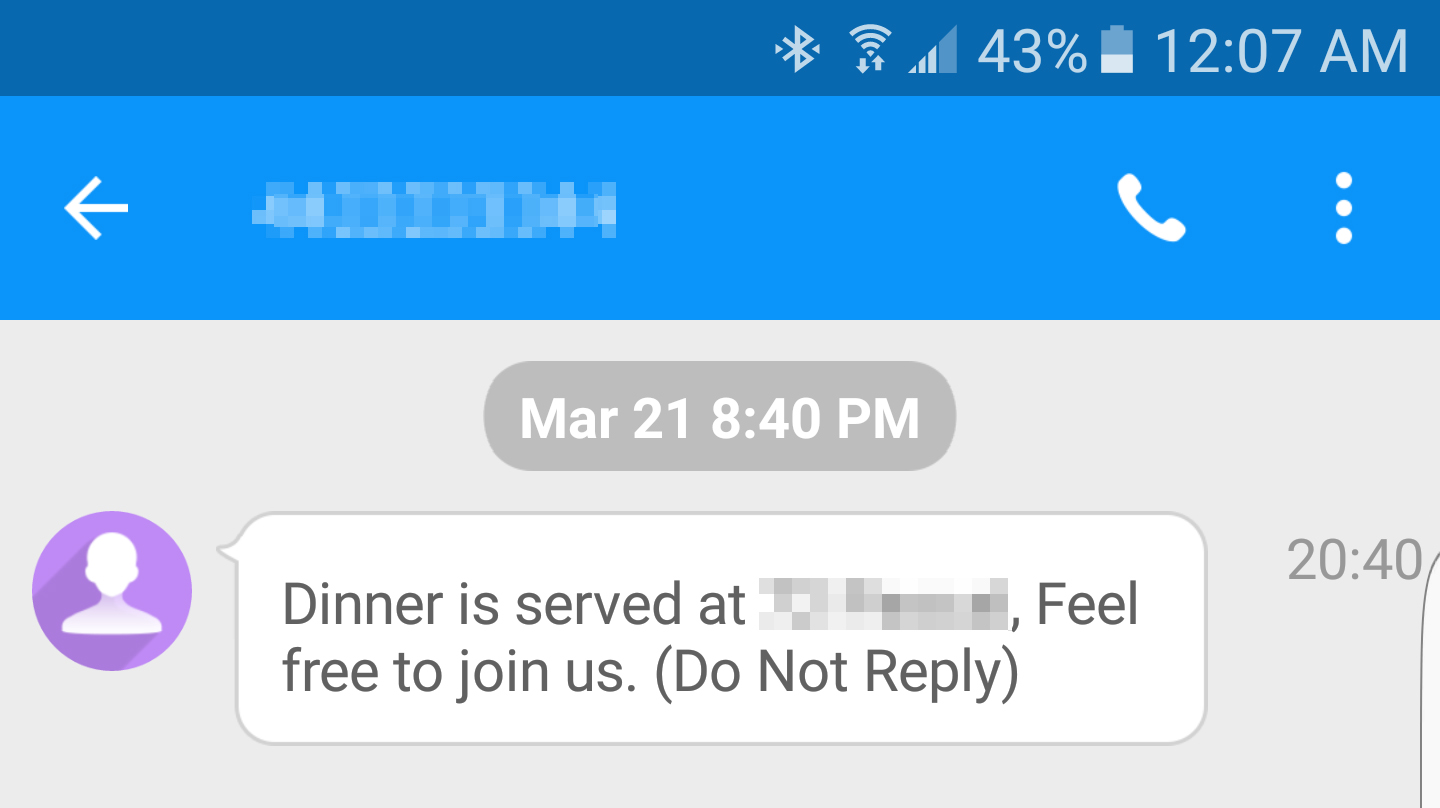

I used a different client library for each of the intents I wanted to accomplish: twilio for sending text messages, speakeasy for two-factor authentication, and a simple sentiment analyzer. Lambda allows configuring environment variables, so all my configuration lived in a separate env file that could be uploaded directly to AWS.
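For readers who cannot make out the screenshots, the overall shape of such a Lambda function can be sketched roughly as follows. This is not the original code: it skips the ASK SDK and the client libraries entirely, works directly with the raw request and response JSON, and the intent name `GreetingIntent` and the environment variable `GREETING` are hypothetical.

```javascript
// Minimal sketch of a Lambda entry point for a custom skill (no SDK).
// GREETING is a hypothetical environment variable used for illustration.
function loadConfig(env = process.env) {
  return { greeting: env.GREETING || 'Hello from Joe!' };
}

// Build a plain-text reply in the Alexa response JSON format.
function speak(text, shouldEndSession = true) {
  return {
    version: '1.0',
    response: {
      outputSpeech: { type: 'PlainText', text },
      shouldEndSession,
    },
  };
}

// One handler per intent; the intent name here is an assumption.
const intentHandlers = {
  GreetingIntent: (intent, config) => speak(config.greeting),
};

// Pick a reply based on the request type and, for intents, the intent name.
function route(event, config) {
  const request = event.request;
  if (request.type === 'LaunchRequest') {
    // Keep the session open so the user can follow up with an intent.
    return speak('Welcome. What would you like to do?', false);
  }
  if (request.type === 'IntentRequest') {
    const handler = intentHandlers[request.intent.name];
    return handler
      ? handler(request.intent, config)
      : speak('Sorry, I do not know how to do that.');
  }
  // SessionEndedRequest and anything else: just end quietly.
  return speak('Goodbye.');
}

// Lambda calls this exported function with the Alexa request as `event`.
const handler = async (event) => route(event, loadConfig());
if (typeof module !== 'undefined') module.exports = { handler };
```

Handlers for the other intents would slot into `intentHandlers` the same way, reading their credentials from `config` rather than hardcoding them. Handlers that call an external service would need to be awaited inside `route`.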

Architecture Diagram

Scope for improvement:

- Try not to write a blog post about something you built 3 years ago. 😛

- Setting up CI/CD to simplify the development process

- Continue to have fun with whatever you are planning to achieve.